Hello Everyone,

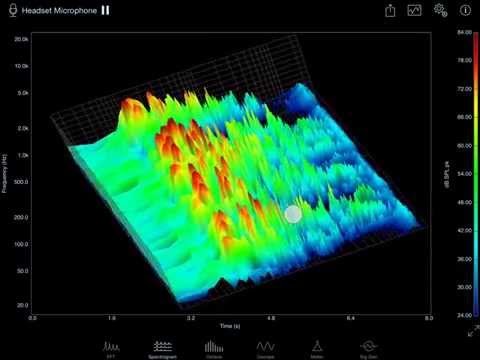

I’m a brand-new OpenGL programmer and I have embarked on a project to plot real-time X, Y, Z data as a 3D waterfall. I am using VS 2010 C# with the SharpGL libraries.

So far I am successful at the following:

Setting up the environment

Creating a 3D graph with axes, labels, a “floor”, rotation, translation, etc.

I am now dealing with plotting the data. As a first test, I have 10 rows of 10 data points each (the Y data), with an X increment of 0.1 and a Z increment of 0.1. In other words, the 10 X values and the 10 Z values are constant, and only Y varies.
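To make that layout concrete, here is a minimal sketch of the test grid. The class and method names are made up for illustration, and the sine values are only placeholders standing in for the real amplitudes. The point is that only Y is stored per point, while X and Z follow from the sample and row indices.

```csharp
using System;

public static class WaterfallTestData
{
    public const int Rows = 10;            // Z direction
    public const int Samples = 10;         // X direction
    public const float XIncrement = 0.1f;
    public const float ZIncrement = 0.1f;

    // Placeholder Y values (a sine ripple); the real amplitudes would go here.
    public static float[][] MakeRows()
    {
        float[][] y = new float[Rows][];
        for (int r = 0; r < Rows; r++)
        {
            y[r] = new float[Samples];
            for (int s = 0; s < Samples; s++)
            {
                y[r][s] = (float)Math.Sin(0.5 * (r + s));
            }
        }
        return y;
    }

    // Returns {x, y, z} for one grid point. X and Z are derived from the indices.
    public static float[] VertexAt(float[][] y, int row, int sample)
    {
        return new float[] { sample * XIncrement, y[row][sample], row * ZIncrement };
    }
}
```

For example, `WaterfallTestData.VertexAt(rows, 2, 3)` gives the point at roughly x = 0.3 and z = 0.2, with y taken from `rows[2][3]`.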

Now I can plot this as triangles using the following code (only the first and second rows are shown):

    private void DrawDataTriangles(OpenGL gl)
    {
        gl.Begin(OpenGL.GL_TRIANGLES);

        numberOfSlices = 1;
        numberOfSamples = 10;

        float xPosition = 0;
        // 1.0f forces floating-point division; 1 / numberOfSamples
        // would evaluate to 0 if numberOfSamples is an int.
        float xIncrement = 1.0f / numberOfSamples;

        for (int i = 0; i < numberOfSlices; i++)
        {
            // Stop one sample early: each quad reads index j + 1,
            // which would run past the end of a 10-element row.
            for (int j = 0; j < numberOfSamples - 1; j++)
            {
                // Cast before dividing so the result is not truncated.
                xPosition = -(float)j / numberOfSamples;

                // First triangle
                gl.Color(0.1, 0.2, 0.3);
                gl.Vertex(xPosition, amplitude_values_firstRow[j], 0);
                gl.Vertex(xPosition, amplitude_values_SecondRow[j], 0.2);
                gl.Vertex(xPosition - xIncrement, amplitude_values_firstRow[j + 1], 0);

                // Second triangle
                gl.Color(0.6, 0.2, 0.7);
                gl.Vertex(xPosition, amplitude_values_SecondRow[j], 0.2);
                gl.Vertex(xPosition - xIncrement, amplitude_values_firstRow[j + 1], 0);
                gl.Vertex(xPosition - xIncrement, amplitude_values_SecondRow[j + 1], 0.2);
            }
        }

        gl.End();
    }

This all works well except when I increase the number of samples. My real data set will have about 2048 Y values per row, and there will be about 100 Z rows. If I try 20,000 samples, the frame rate drops to 1.5 fps. So my first question is: what am I doing that I shouldn’t be doing? I’m sure there are much better ways, but being new I have not discovered them yet. Any pointers for this specific case, where I plot a static surface?
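To put a number on the real data set, here is a rough count of the per-frame `gl.Vertex` calls, assuming the double loop above is extended to every pair of adjacent rows. The helper name is hypothetical.

```csharp
public static class ImmediateModeCost
{
    // Each grid cell between four neighbouring points is drawn as
    // two triangles, and each triangle issues three gl.Vertex calls.
    public static long VertexCalls(int samplesPerRow, int rows)
    {
        long cells = (long)(samplesPerRow - 1) * (rows - 1);
        return cells * 2 * 3;
    }
}
```

With 2048 samples and 100 rows this comes to 2047 × 99 × 6 = 1,215,918 vertex calls per frame, all issued one by one from C#.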

In the real situation I will be getting a new row (array) of Y data every 50 ms. What I think should be done, instead of plotting everything all over again, is to “push” the existing rows toward the back of the scene and then just add the next row in front. How does one do this? Translate? What happens when I reach the back of the scene? Do I start deleting rows, or is there a way that OpenGL will do this for me?
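On the data side, the “push back and drop the oldest row” idea could be sketched as a fixed-size ring buffer like the one below. This is only an assumption about how the rows might be stored. The class is hypothetical, it contains no OpenGL calls, and it does not answer the drawing question.

```csharp
using System;

public class RowRingBuffer
{
    private readonly float[][] rows;
    private int newest = -1;   // index of the most recently pushed row
    private int count = 0;     // number of valid rows stored so far

    public RowRingBuffer(int capacity, int samplesPerRow)
    {
        rows = new float[capacity][];
        for (int i = 0; i < capacity; i++)
        {
            rows[i] = new float[samplesPerRow];
        }
    }

    public int Count { get { return count; } }

    // Copies a new row in; once the buffer is full this overwrites the oldest row.
    public void Push(float[] yValues)
    {
        newest = (newest + 1) % rows.Length;
        Array.Copy(yValues, rows[newest], rows[newest].Length);
        if (count < rows.Length) count++;
    }

    // age 0 is the newest row (front of the scene), age Count - 1 the oldest (back).
    public float[] RowByAge(int age)
    {
        if (age < 0 || age >= count) throw new ArgumentOutOfRangeException("age");
        int index = (newest - age + rows.Length) % rows.Length;
        return rows[index];
    }
}
```

With a buffer like this, a row's Z position would simply be its age times the Z increment, so rows move back one step per push and the oldest one disappears once the capacity (about 100 rows in my case) is reached.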

Like I said, I’m a total beginner in OpenGL but some hints would be great.

Thank you, Tom