Hi guys,

What are your favorite methods to stitch separate meshes?

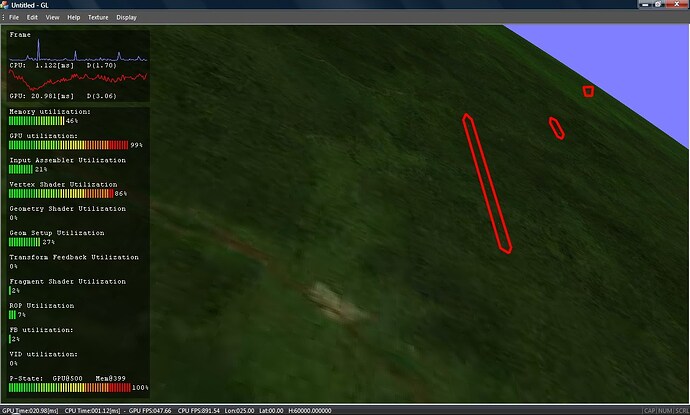

In the following figures I have demonstrated the problem.

Fig.1

Fig.2

The rasterization misses some pixels along the borders between the meshes. The problem can be overcome by overlapping the meshes or by rendering an additional transition area.

Is there any less expensive method?

Thank you in advance!

P.S. Sorry for the links. They are fixed now.

To my knowledge, if the shared edge has vertices with exactly the same xyzw values, this should not happen.
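The reason exactly matching vertices are enough: the OpenGL specification requires that when a fragment centre lies on an edge shared by two polygons with identical endpoints, exactly one of the two polygons produces that fragment. Below is a minimal C sketch of one way a rasterizer can satisfy this. It assumes integer (sub-pixel fixed-point) screen coordinates, counter-clockwise triangles and a tie-break rule similar in spirit to Direct3D's top-left rule; the type and function names are illustrative, not any real driver's code.

```c
#include <assert.h>
#include <stdbool.h>

/* Screen position in fixed-point sub-pixel units (rasterizers typically
   snap vertices to such a grid before testing coverage). */
typedef struct { long x, y; } Pt;
typedef struct { Pt v[3]; } Tri; /* counter-clockwise winding assumed */

/* > 0 when p lies to the left of the directed edge a->b, 0 when on it. */
static long edge_fn(Pt a, Pt b, Pt p) {
    return (b.x - a.x) * (p.y - a.y) - (b.y - a.y) * (p.x - a.x);
}

/* Tie-break: for any non-zero direction d, exactly one of d and -d "owns"
   samples lying exactly on the edge. */
static bool owns_edge(long dx, long dy) {
    assert(dx != 0 || dy != 0); /* degenerate edges are not supported */
    return dy < 0 || (dy == 0 && dx > 0);
}

/* True if sample p is covered by triangle t under the tie-break rule. */
static bool covers(const Tri *t, Pt p) {
    for (int i = 0; i < 3; ++i) {
        Pt a = t->v[i], b = t->v[(i + 1) % 3];
        long w = edge_fn(a, b, p);
        if (w < 0) return false;
        if (w == 0 && !owns_edge(b.x - a.x, b.y - a.y)) return false;
    }
    return true;
}
```

The two triangles traverse a shared edge in opposite directions, so the tie-break hands every on-edge sample to exactly one of them. If one copy of a shared vertex is off by even a single sub-pixel unit, the triangles no longer share a line, and samples along the seam can fall outside both.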

A workaround is to “weld” vertices in a preprocessing step: vertices closer than some small threshold distance are snapped to the same values.
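A minimal sketch of such a welding pass, assuming the vertices are available in a CPU-side array. `weld_vertices` and `Vec4` are hypothetical names, and the quadratic search is only for clarity; a spatial hash or sorted grid would be the usual choice for large meshes.

```c
#include <assert.h>
#include <stddef.h>

typedef struct { float x, y, z, w; } Vec4;

/* Hypothetical welding pass: every vertex lying within eps (Euclidean
   distance in xyz) of an earlier vertex is overwritten with that earlier
   vertex, so both sides of a seam end up with bit-identical xyzw values.
   Returns the number of vertices that were changed. */
static size_t weld_vertices(Vec4 *v, size_t n, float eps) {
    assert(eps > 0.0f);
    const float eps2 = eps * eps;
    size_t changed = 0;
    for (size_t i = 1; i < n; ++i) {
        for (size_t j = 0; j < i; ++j) {
            float dx = v[i].x - v[j].x;
            float dy = v[i].y - v[j].y;
            float dz = v[i].z - v[j].z;
            if (dx * dx + dy * dy + dz * dz <= eps2) {
                if (v[i].x != v[j].x || v[i].y != v[j].y ||
                    v[i].z != v[j].z || v[i].w != v[j].w) {
                    v[i] = v[j];
                    ++changed;
                }
                break; /* snap to the first match only */
            }
        }
    }
    return changed;
}
```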

Yes, welding is a possible and easy-to-implement solution, but only when the meshes are prepared on the CPU. In my case, meshes are generated in vertex or tessellation shaders. Writing the values of the boundary vertices into a buffer/texture and reading them back is possible, but it seems expensive, since it complicates testing and branching, and it has to be applied to all vertices (possibly over a million).

The calculation producing the vertices is the same, but finite precision probably introduces “negligible” differences that make pixels disappear.

I would be more concerned that your tessellation shaders aren’t producing consistent results. Is your code designed to produce the exact, binary identical value for boundary interpolated vertices, regardless of which side they’re on?
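One way to get binary-identical boundary vertices (a sketch in C rather than GLSL, with hypothetical helper names) is to have both patches evaluate a shared edge in the same canonical direction, driven by an integer step index, so that both sides run exactly the same arithmetic on exactly the same operands.

```c
#include <assert.h>
#include <stdbool.h>

typedef struct { float x, y, z; } Vec3;

/* Deterministic ordering of two points (lexicographic on x, then y, then z). */
static bool vec3_less(Vec3 a, Vec3 b) {
    if (a.x != b.x) return a.x < b.x;
    if (a.y != b.y) return a.y < b.y;
    return a.z < b.z;
}

static float lerpf(float a, float b, float t) { return a + t * (b - a); }

/* Vertex k of n along the edge between a and b. The endpoints are put into a
   canonical order first, so edge_point(a, b, k, n) and
   edge_point(b, a, n - k, n) perform identical operations and return
   bit-identical results. */
static Vec3 edge_point(Vec3 a, Vec3 b, int k, int n) {
    assert(n > 0 && k >= 0 && k <= n);
    if (vec3_less(b, a)) {
        Vec3 tmp = a; a = b; b = tmp;
        k = n - k;
    }
    float t = (float)k / (float)n;
    Vec3 p = { lerpf(a.x, b.x, t), lerpf(a.y, b.y, t), lerpf(a.z, b.z, t) };
    return p;
}
```

The interpolation formula itself does not matter; what matters is that neither side derives its own `1 - t`, because in floating point `1 - (1 - t)` is not always equal to `t` (for example with `t = 0.1f`). In GLSL, the `precise` qualifier (GLSL 4.00 and later) is intended to stop the compiler from evaluating such expressions differently in different places, for example by fusing a multiply-add in one shader but not in another.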

In the example shown above, the geometry is generated in a vertex shader, not in a tessellation shader (please take a look at the “Vertex Shader Utilization” equalizer). The values generated by the VS are not binary identical. The best I can achieve is a relative error below 10e-5, but obviously that is not enough.

Interestingly, if I multiply all calculated coordinates by 1.0001, the artifacts disappear on the current machine. But this doesn’t mean they won’t appear in other situations.

Is there any way to force rounding up for FP calculations in shaders?

It is surprising that your vertex shader does not give the same results for the same inputs.

Can you post it? Or bisect it to find which part introduces these small relative errors?

The values are not the same, since they are generated under different conditions. Each patch is generated relative to its central point and then rotated/translated to its final position.

The generation process is quite precise. Although the patches are created under different conditions, the generated values are exactly the same (I wasn’t aware of that until now). It is the model-view-projection matrix that introduces the differences.

For example, these are the gl_Position coordinates of the upper right vertex of the left patch and of the upper left vertex of the right patch:

| Component | Left patch, upper right vertex | Right patch, upper left vertex |
|---|---|---|
| x | -346.87967 (xn = -0.35223162) | -346.87961 (xn = -0.35223153) |
| y | -828.29907 (yn = -0.84107876) | -828.29907 (yn = -0.84107876) |
| z | 784.84100 (zn = 0.79695016) | 784.84106 (zn = 0.79695022) |
| w | 984.80566 (wn = 1.0) | 984.80560 (wn = 1.0) |

Although similar, they are not the same. The differences are in the least significant digits of each number (before normalization), but that is the maximum precision FP can offer. (xn, yn, zn) are the normalized device coordinates (assuming the division is done with the same precision as the other FP operations). The MVP matrix is calculated on the CPU in double precision, but casting it to FP also introduces errors.
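To put a number on “the least significant digits”, the small C sketch below (helper names are hypothetical) counts how many representable floats lie between each pair of posted gl_Position values, taking the printed decimals at face value. For the x, y, z and w pairs it reports 2, 0, 1 and 1 ULP respectively, and near 984.8 one ULP is 2^-14 (about 6.1e-5), so the two vertices are as close as single precision allows without being identical.

```c
#include <assert.h>
#include <float.h>
#include <stdint.h>
#include <string.h>

/* Map a float's bit pattern to an integer that increases monotonically with
   the float's value (negative values included; -0.0f and +0.0f map to 0). */
static int64_t ordered_bits(float f) {
    int32_t i;
    memcpy(&i, &f, sizeof i);
    return i < 0 ? (int64_t)INT32_MIN - i : (int64_t)i;
}

/* Number of representable floats between a and b (0 means identical). */
static int64_t ulp_distance(float a, float b) {
    int64_t d = ordered_bits(a) - ordered_bits(b);
    return d < 0 ? -d : d;
}

/* Gap between |x| and the next larger representable float. */
static float ulp_of(float x) {
    float ax = x < 0.0f ? -x : x;
    assert(ax <= FLT_MAX); /* finite, non-NaN input only */
    uint32_t bits;
    memcpy(&bits, &ax, sizeof bits);
    bits += 1u;
    float next;
    memcpy(&next, &bits, sizeof next);
    return next - ax;
}
```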

If you’re using different MVP matrices for different patches, you will always have these errors, and they may need to be masked in some way to make them less obvious (e.g. overlapping geometry, clearing the background to a similar color, or using higher precision).
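A reduced C sketch of why different per-patch matrices are enough on their own, using hypothetical names and translation-only model matrices: patch A places a vertex at local x = 0.3 with a translation of 1000.1, while patch B reaches the same world point from local x = -0.3 with a translation of 1000.7. Mathematically both give x = 1000.4, but the inputs and the sums round differently in single precision, and the two results end up one ULP (about 6e-5 at this magnitude) apart. A full MVP multiply only adds more roundings.

```c
#include <assert.h>

/* Row-major 4x4 matrix: out[r] = sum over c of m[r][c] * v[c]. */
typedef struct { float m[4][4]; } Mat4;
typedef struct { float v[4]; } Vec4f;

/* Hypothetical per-patch model matrix: a pure translation. */
static Mat4 translation(float tx, float ty, float tz) {
    Mat4 t = {{{1.0f, 0.0f, 0.0f, tx},
               {0.0f, 1.0f, 0.0f, ty},
               {0.0f, 0.0f, 1.0f, tz},
               {0.0f, 0.0f, 0.0f, 1.0f}}};
    return t;
}

/* Single-precision matrix-vector product. */
static Vec4f mul(const Mat4 *a, Vec4f p) {
    Vec4f r;
    for (int row = 0; row < 4; ++row) {
        float s = 0.0f;
        for (int c = 0; c < 4; ++c) s += a->m[row][c] * p.v[c];
        r.v[row] = s;
    }
    return r;
}

/* Place a patch-local position (w must be 1) into world space. */
static Vec4f to_world(const Mat4 *model, Vec4f local) {
    assert(local.v[3] == 1.0f);
    return mul(model, local);
}
```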

To eliminate the errors entirely, you’d need to use the same MVP matrix for each patch/model, and even then you’d have to be careful to match up the edges precisely. A min/max representation of patches might be better than centre coord + width/height.

E.g. a patch defined with centre coord (1, 1) and dimensions (0.1, 0.1) might not match up exactly with a patch at (1, 1.2) with dimensions (0.1, 0.1), since (1 + 0.1) != (1.2 - 0.1) when you are dealing with floating point. If the patches were defined by their min/max points, then a patch with min=(0.9, 0.9) and max=(1.1, 1.1) and one with min=(1.1, 0.9) and max=(1.3, 1.1) would match up.
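The same comparison as a small C sketch (type and function names are hypothetical; the dimensions are treated as half-extents, which is what makes the two centre-based patches touch). In double precision `1.0 + 0.1` and `1.2 - 0.1` round to neighbouring values, so the centre-based edges do not match, while the min/max patches compare the same stored number 1.1 on both sides.

```c
#include <assert.h>
#include <stdbool.h>

/* Patch stored as centre + half-extent. */
typedef struct { double cx, cy, hx, hy; } CentrePatch;
/* Patch stored as min/max corners. */
typedef struct { double minx, miny, maxx, maxy; } MinMaxPatch;

/* Top edge of `lower` vs bottom edge of `upper`, each derived from a centre. */
static bool centre_edges_match(const CentrePatch *lower, const CentrePatch *upper) {
    assert(lower->hy >= 0.0 && upper->hy >= 0.0);
    double top_of_lower = lower->cy + lower->hy;
    double bottom_of_upper = upper->cy - upper->hy;
    return top_of_lower == bottom_of_upper;
}

/* Right edge of `left` vs left edge of `right`: both are stored values, so no
   arithmetic (and no rounding) happens before the comparison. */
static bool minmax_edges_match(const MinMaxPatch *left, const MinMaxPatch *right) {
    return left->maxx == right->minx;
}
```

In single precision these two particular sums happen to round to the same float, which is luck of the values chosen rather than a guarantee.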

Thanks for the advice, Dan, but there are some problems that cannot be solved easily.

Exactly! Overlapping is the first solution that came to my mind, but until the artifacts appear again, I’ll keep ignoring the problem.

Having the same MVP matrix is impossible, since the patches have to be placed at different positions.

In my case both representations produce the same issue. Even if the four corner vertices have the same coordinates in DP, the other vertices along the edges will differ slightly.

On the other hand, the math for positioning vertices is quite complicated. I had to implement my own trigonometric functions to achieve adequate precision. For example, I can position the viewer 1 micron above the surface of a medium-size planet (like Earth) with no artifacts (except the missing pixels). At a height of 10 microns even those artifacts disappear. All this is possible with ordinary FP precision, without emulating DP. Even I was amazed when I saw for the first time (last year) that this is possible with a single shader, whether the viewer is a million kilometers above Earth or just a few microns.
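For scale, a sketch assuming the naive scheme of storing vertex positions as single-precision distances from the planet centre (Earth’s mean radius is about 6371 km; the helper name is hypothetical): near the surface the smallest step a float can register is 0.5 m, so a 1 µm altitude offset disappears entirely in plain FP arithmetic.

```c
#include <assert.h>
#include <float.h>

/* Smallest power-of-two offset that still changes r when added in float.
   Hypothetical helper for illustrating precision loss far from the origin. */
static float smallest_visible_step(float r) {
    assert(r > 0.0f && r <= FLT_MAX);
    float step = 1.0f;
    for (;;) {                        /* shrink while half the step registers */
        float sum = r + step / 2.0f;  /* assignment rounds to float */
        if (sum == r) break;
        step /= 2.0f;
    }
    for (;;) {                        /* grow until the step registers at all */
        float sum = r + step;
        if (sum != r) break;
        step *= 2.0f;
    }
    return step;
}
```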

Are you sure it is a problem that the 1 µm view of Earth has a few pixels off? I have difficulty imagining a real use case.

Nope! That’s just “the demonstration of the power”.

Without the correction, missing pixels appear at any height; with the correction they are visible only at heights around 1 µm. The standard approach produces artifacts at about 30,000 m, and at 3,000 m blocks start falling apart. I’ll make movies to demonstrate this. My approach gives more than 8 orders of magnitude better precision. Of course, it is not a regular use case, but … as I’ve already said…