Hello,

I'm starting out with Vulkan and I've noticed that my drawing method gets slower in proportion to the number of objects to render. For example, refreshing the view can take more than a second, while exactly the same test in OpenGL is instantaneous.

I use LWJGL (so Java) and GLFW on macOS (Radeon Pro 580, 8 GB).

I'm obviously doing something wrong, but I don't see how to do it better.

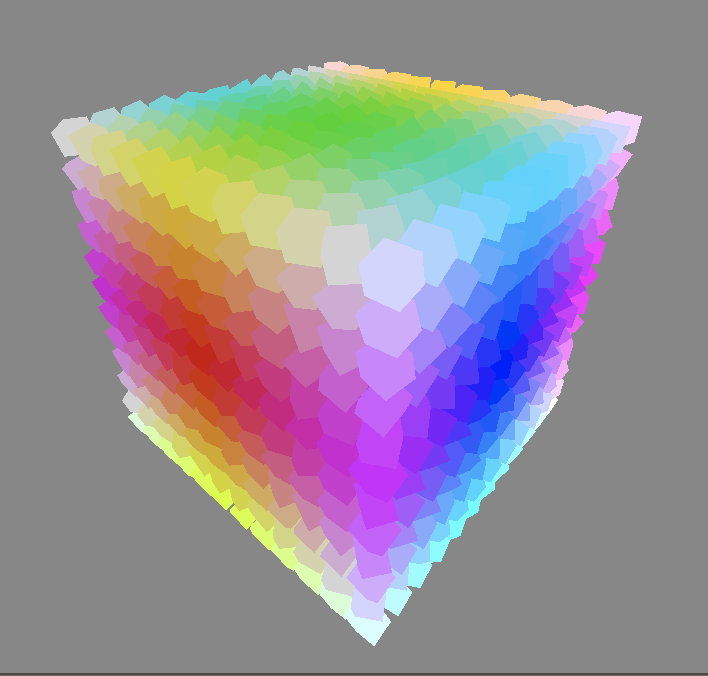

The test consists of displaying 1728 cubes (12³) and changing the camera parameters to force the view to refresh.

For rendering, I loop over every cube between vkCmdBeginRenderPass and vkCmdEndRenderPass. For each cube I bind the descriptor set, the vertex buffer and the index buffer, then call vkCmdDrawIndexed(), and start again with the next cube, and so on.

There is a single descriptor set with a dynamic uniform buffer that holds the model, view and projection matrices of every cube (addressed by dynamic offset), so the transformation is done in the vertex shader.
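For this dynamic-offset scheme to be valid, each per-cube offset passed to vkCmdBindDescriptorSets must be a multiple of `VkPhysicalDeviceLimits.minUniformBufferOffsetAlignment`, so the per-cube stride is the uniform block size rounded up to that alignment. A minimal sketch in plain Java, assuming each cube's block is three `mat4` (192 bytes) and a hypothetical alignment of 256 bytes (the real value has to be queried from the physical device); the class and method names are hypothetical.

```java
// Sketch: per-cube dynamic offsets into one shared uniform buffer.
// Assumptions (hypothetical): 3 x mat4 per cube, minAlignment = 256 bytes.
// The real alignment comes from VkPhysicalDeviceLimits.minUniformBufferOffsetAlignment.
public final class DynamicUboOffsets {

    /** Rounds size up to the next multiple of alignment (alignment must be a power of two). */
    static long alignUp(long size, long alignment) {
        return (size + alignment - 1) & ~(alignment - 1);
    }

    /** Byte offset of cube i's block inside the shared uniform buffer. */
    static int offsetFor(int cubeIndex, long uboSize, long minAlignment) {
        return Math.toIntExact(cubeIndex * alignUp(uboSize, minAlignment));
    }

    public static void main(String[] args) {
        long uboSize = 3 * 16 * Float.BYTES; // model, view, projection: 3 x mat4 = 192 bytes
        long minAlignment = 256;             // hypothetical; query it from the device
        int cubes = 12 * 12 * 12;
        long stride = alignUp(uboSize, minAlignment);
        System.out.println("stride = " + stride);
        System.out.println("buffer size = " + stride * cubes);
        System.out.println("offset of cube 10 = " + offsetFor(10, uboSize, minAlignment));
    }
}
```

Rounding the stride once and multiplying keeps every offset aligned without checking each cube individually.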

The vertices and colors of all the cubes are in a single vertex buffer (addressed by offset), and the indices are also in a single index buffer (addressed by offset). The indices are relative to the cube's offset in the vertex buffer, not to its start: each cube has 8 vertices, so the first cube's indices range from 0 to 7, the second cube's also range from 0 to 7, and so on.
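Because every cube reuses the same local indices 0 to 7, the per-cube rebinding of the vertex and index buffers can probably be avoided. Bind the pipeline, the vertex buffer and the index buffer once, at offset 0, before the loop, and select each cube with the `firstIndex` and `vertexOffset` arguments of vkCmdDrawIndexed. The GPU adds `vertexOffset` to every fetched index, and it is counted in vertices, not bytes. The sketch below only computes those two arguments. It assumes 36 indices (12 triangles) and 8 vertices per cube, stored back to back; the class and method names are hypothetical.

```java
// Sketch: per-cube draw arguments when one vertex buffer and one index buffer
// are bound ONCE, outside the loop.
// Assumptions (hypothetical): 8 vertices and 36 uint16 indices per cube, stored back to back.
public final class CubeDrawParams {
    static final int VERTICES_PER_CUBE = 8;
    static final int INDICES_PER_CUBE = 36; // 6 faces x 2 triangles x 3 indices

    /** firstIndex argument when each cube keeps its own copy of the indices. */
    static int firstIndex(int cube) {
        return cube * INDICES_PER_CUBE;
    }

    /** vertexOffset argument, in vertices: added by the GPU to every index before the vertex fetch. */
    static int vertexOffset(int cube) {
        return cube * VERTICES_PER_CUBE;
    }

    public static void main(String[] args) {
        for (int cube = 0; cube < 3; cube++) {
            System.out.printf("cube %d: firstIndex=%d vertexOffset=%d%n",
                    cube, firstIndex(cube), vertexOffset(cube));
        }
    }
}
```

Inside the loop the draw would then look like `vkCmdDrawIndexed(renderCommandBuffer, cubeIndexSize, 1, firstIndex(cube), vertexOffset(cube), 0)`, leaving only the descriptor-set bind (for the dynamic offset) and the draw per cube. Since all cubes use identical local indices, a single copy of them with `firstIndex = 0` would also work.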

The loop algorithm looks like this:

```
for each cube {
    offsetUbo     = cube.getOffsetUbo()
    offsetVertex  = cube.getOffsetVertex()
    offsetIndex   = cube.getOffsetIndex()
    cubeIndexSize = cube.getIndexSize()

    vkCmdBindDescriptorSets(renderCommandBuffer, VK_PIPELINE_BIND_POINT_GRAPHICS, pipelineLayout, descriptorSets, offsetUbo)
    vkCmdBindPipeline(renderCommandBuffer, VK_PIPELINE_BIND_POINT_GRAPHICS, pipeline)
    vkCmdBindVertexBuffers(renderCommandBuffer, 0, vertexBuffer, offsetVertex)
    vkCmdBindIndexBuffer(renderCommandBuffer, indexBuffer, offsetIndex, VK_INDEX_TYPE_UINT16)
    vkCmdDrawIndexed(renderCommandBuffer, cubeIndexSize, 1, 0, 0, 0)
}
```

It turns out that calling these functions in a loop is very expensive. From most to least expensive:

vkCmdBindDescriptorSets, vkCmdDrawIndexed, vkCmdBindPipeline, vkCmdBindVertexBuffers and vkCmdBindIndexBuffer.

Given this, it seems clear that I need to get rid of the loop, and some functions seem designed for exactly that, but I don't see how to use them.
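One mechanism Vulkan provides for this is instancing through the `instanceCount` parameter of vkCmdDrawIndexed. With a single cube's 8 vertices and 36 indices bound once (an assumption), all 1728 cubes could be drawn by one call such as `vkCmdDrawIndexed(renderCommandBuffer, 36, 1728, 0, 0, 0)`. The vertex shader would then pick each cube's model matrix from one buffer using `gl_InstanceIndex`, while view and projection are shared, for example in a small uniform buffer or push constants. 1728 `mat4` take 110,592 bytes, which may exceed `maxUniformBufferRange` on some devices, so a storage buffer is the safer place for them. This sketch only builds that per-instance data in plain Java, in the column-major layout GLSL uses for `mat4`. The grid spacing and the class name are hypothetical.

```java
// Sketch: per-instance model matrices for an n x n x n grid of cubes, packed
// column-major (GLSL mat4 layout) so the array can be copied into one buffer
// and indexed in the vertex shader by gl_InstanceIndex.
// Assumptions (hypothetical): translation only, uniform spacing between cubes.
public final class InstanceMatrices {
    static final int FLOATS_PER_MAT4 = 16;

    static float[] gridModelMatrices(int n, float spacing) {
        float[] data = new float[n * n * n * FLOATS_PER_MAT4];
        int instance = 0;
        for (int x = 0; x < n; x++) {
            for (int y = 0; y < n; y++) {
                for (int z = 0; z < n; z++) {
                    int base = instance * FLOATS_PER_MAT4;
                    // Identity rotation/scale: the diagonal of the 4x4 matrix.
                    data[base] = 1f;
                    data[base + 5] = 1f;
                    data[base + 10] = 1f;
                    data[base + 15] = 1f;
                    // Translation is the 4th column: elements 12, 13, 14 in column-major order.
                    data[base + 12] = x * spacing;
                    data[base + 13] = y * spacing;
                    data[base + 14] = z * spacing;
                    instance++;
                }
            }
        }
        return data;
    }

    public static void main(String[] args) {
        float[] m = gridModelMatrices(12, 2.5f);
        int instances = m.length / FLOATS_PER_MAT4;
        // instanceCount for the single draw call, and the buffer size to allocate.
        System.out.println("instances = " + instances + ", bytes = " + m.length * Float.BYTES);
    }
}
```

With this layout the per-cube descriptor-set binds disappear as well: the descriptor set is bound once and the instance index selects the matrix.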

I thought of filling the index buffer with indices relative to the start of the vertex buffer and passing vkCmdDrawIndexed the total index count of all the cubes. That draws everything in one call, but only with a single shared transformation in the vertex shader, because I don't see how to link each cube to its own offset in the descriptor set.
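The rebasing described above amounts to adding `cube * 8` to each cube's local indices. With `VK_INDEX_TYPE_UINT16` that only works while the highest vertex number fits in 16 bits: 1728 cubes use vertices 0 to 13,823, which is fine. A minimal sketch in plain Java, assuming 8 vertices per cube; the class name and the 6-index sample face are hypothetical.

```java
// Sketch: rebase each cube's local indices (0..7) onto the start of the shared
// vertex buffer, producing one uint16 index buffer for a single vkCmdDrawIndexed call.
// Assumption (hypothetical): 8 vertices per cube, cubes stored back to back.
public final class GlobalIndices {
    static final int VERTICES_PER_CUBE = 8;

    static short[] rebase(int[] localIndices, int cubeCount) {
        long maxVertex = (long) cubeCount * VERTICES_PER_CUBE - 1;
        if (maxVertex > 0xFFFF) {
            throw new IllegalArgumentException("too many vertices for VK_INDEX_TYPE_UINT16; use UINT32");
        }
        short[] out = new short[localIndices.length * cubeCount];
        for (int cube = 0; cube < cubeCount; cube++) {
            int base = cube * VERTICES_PER_CUBE;
            for (int i = 0; i < localIndices.length; i++) {
                // Java short is signed; the cast keeps the low 16 bits, which the GPU reads as unsigned.
                out[cube * localIndices.length + i] = (short) (base + localIndices[i]);
            }
        }
        return out;
    }

    public static void main(String[] args) {
        int[] local = {0, 1, 2, 2, 3, 0}; // hypothetical: a single face, for brevity
        short[] all = rebase(local, 12 * 12 * 12);
        // The last cube starts at vertex 1727 * 8 = 13816.
        System.out.println(all.length + " indices, last = " + Short.toUnsignedInt(all[all.length - 1]));
    }
}
```

With indices rebased like this, `gl_VertexIndex` in the vertex shader equals the global vertex number, so `gl_VertexIndex / 8` identifies the cube. That value could index an array of per-cube matrices in a storage buffer, which is one possible (untested) way to link each cube to its own transformation without a per-cube descriptor-set bind.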

Any ideas are welcome (and whoever has one wins a gold star).
