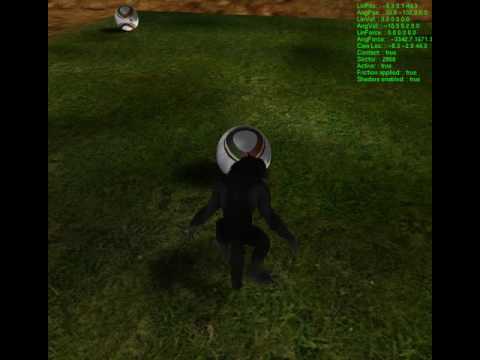

I can’t figure out why I am getting this self-shadowing effect even though I am culling front faces to prevent it. Maybe from looking at this sample you can tell what is actually happening here…

I am using an orthographic projection as such

glm::ortho(-1000.0f, 1000.0f, -1000.0f, 1000.0f, 1.0f, 10000.0f), and I am worried that the near and far planes could cause depth buffer precision issues. I am then using

glm::lookAt(lightPosition, glm::vec3(0.0f), glm::vec3(0.0, 1.0, 0.0)), which orients the orthographic projection along the light’s direction.
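To put a number on the near/far concern, here is a minimal sketch that estimates how much distance one depth-buffer step covers under an orthographic projection, where stored depth is linear in distance from the near plane. The function name `orthoDepthStep` is hypothetical, and the 24-bit fixed-point depth format is an assumption, since the post does not say which format the depth texture uses.

```cpp
#include <cassert>
#include <cmath>

// Distance (in world units along the light direction) covered by one step of a
// fixed-point depth buffer under an orthographic projection. Orthographic depth
// is linear, so the step is the same everywhere between near and far.
// ASSUMPTION: depthBits = 24; the actual depth format is not stated in the post.
double orthoDepthStep(double nearPlane, double farPlane, int depthBits = 24)
{
    assert(farPlane > nearPlane && depthBits > 0 && depthBits < 53);
    const double levels = std::ldexp(1.0, depthBits) - 1.0; // 2^bits - 1 intervals
    return (farPlane - nearPlane) / levels;
}
```

Calling `orthoDepthStep(1.0, 10000.0)` with the planes from the `glm::ortho` call above gives a step of roughly 0.0006 world units, so under this assumption the near/far range alone would only matter for surfaces separated by less than that.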

My depth texture is very high resolution: 8 times the resolution of my viewport. Increasing the depth texture’s resolution improves the sharpness of the shadows. For the depth pass I cull front faces, draw the geometry with my shader, and store the result in a framebuffer that has the depth texture attached.
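For a sense of scale, the following sketch computes how much of the scene a single shadow-map texel covers for an orthographic light frustum. The function name `shadowTexelSize` is hypothetical, and the 1280-pixel viewport width used in the usage note is an assumed value, as is reading "8 times" as 8 times per axis; neither is given in the post.

```cpp
#include <cassert>

// World-space width covered by one shadow-map texel when the light uses an
// orthographic projection spanning [left, right] across shadowMapWidth texels.
double shadowTexelSize(double left, double right, int shadowMapWidth)
{
    assert(right > left && shadowMapWidth > 0);
    return (right - left) / shadowMapWidth;
}
```

With the ±1000 extents above and an assumed shadow map width of 8 × 1280 = 10240 texels, `shadowTexelSize(-1000.0, 1000.0, 10240)` is about 0.195 world units per texel. On a surface that is steeply inclined to the light, the stored depth can change by a comparable amount across one texel, which is one reason curved objects may behave differently from flat terrain.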

I can display my depth texture and everything looks fine, perfect actually. I then switch back to back-face culling and do the actual render. What bothers me is that the landscapes do not seem to suffer from self-shadowing, while the sphere and rocks do.

One thing I know is that the landscapes are planes, so I am only ever looking at either their front or their back, while the rocks and spheres always have both front-facing and back-facing surfaces no matter which direction they are viewed from. Any ideas will help. I’m glad I ran into this problem because it taught me a lot about how shadows are created, but this is just not fun anymore.

Thanks