Hello!

For an OpenGL application I need to be able to copy one image onto another using render-to-texture. Here is the section of code in question (note that I’m using JOGL, a Java binding for OpenGL, but the OpenGL calls should behave the same as in C):

Code section

    this.context.gl.glBindFramebuffer(GL.GL_FRAMEBUFFER, context.getTextureRenderer());
    this.context.gl.glFramebufferTexture2D(GL.GL_FRAMEBUFFER, GL2.GL_COLOR_ATTACHMENT0, GL.GL_TEXTURE_2D, texture.get(0), 0);
    this.context.gl.glDrawBuffer(GL2.GL_COLOR_ATTACHMENT0);
    this.context.gl.glBindTexture(GL.GL_TEXTURE_2D, src.getTexture());

    float sx0 = getXTex(src, sx);
    float sy0 = getYTex(src, sy);
    float sx1 = getXTex(src, sx + sw);
    float sy1 = getYTex(src, sy + sh);

    float dx0 = getXScreen(dx);
    float dy0 = getYScreen(dy);
    float dx1 = getXScreen(dx + dw);
    float dy1 = getYScreen(dy + dh);

    float actual_sx0 = max(sx0, 0);
    float actual_sy0 = max(sy0, 0);
    float actual_sx1 = min(sx1, 1);
    float actual_sy1 = min(sy1, 1);

    float actual_dx0 = map(map(actual_sx0, sx0, sx1, 0, 1), 0, 1, dx0, dx1);
    float actual_dy0 = map(map(actual_sy0, sy0, sy1, 0, 1), 0, 1, dy0, dy1);
    float actual_dx1 = map(map(actual_sx1, sx0, sx1, 0, 1), 0, 1, dx0, dx1);
    float actual_dy1 = map(map(actual_sy1, sy0, sy1, 0, 1), 0, 1, dy0, dy1);

    this.context.gl.glColor4f(1f, 1f, 1f, 1f);
    this.context.gl.glBegin(GL2.GL_QUADS);
    this.context.gl.glTexCoord2f(actual_sx0, actual_sy0);
    this.context.gl.glVertex2f(actual_dx0, actual_dy0);
    this.context.gl.glTexCoord2f(actual_sx0, actual_sy1);
    this.context.gl.glVertex2f(actual_dx0, actual_dy1);
    this.context.gl.glTexCoord2f(actual_sx1, actual_sy1);
    this.context.gl.glVertex2f(actual_dx1, actual_dy1);
    this.context.gl.glTexCoord2f(actual_sx1, actual_sy0);
    this.context.gl.glVertex2f(actual_dx1, actual_dy0);
    this.context.gl.glEnd();
    this.context.gl.glFlush();

    this.context.gl.glBindTexture(GL2.GL_TEXTURE_2D, 0);
    this.context.gl.glFramebufferTexture2D(GL.GL_FRAMEBUFFER, GL2.GL_COLOR_ATTACHMENT0, GL.GL_TEXTURE_2D, 0, 0);
    this.context.gl.glBindFramebuffer(GL.GL_FRAMEBUFFER, 0);
    this.context.gl.glDrawBuffer(GL.GL_FRONT_AND_BACK);
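
The helpers used in the listing above (`map`, `getXTex`, `getXScreen`, ...) are not shown. To make the clamping step easier to follow, here is a minimal sketch that assumes `map` is a plain linear remap in the style of Processing's `map(value, start1, stop1, start2, stop2)`. The class name `RemapSketch` and the method `clip` are made up for illustration. Under that assumption the nested `map(map(v, s0, s1, 0, 1), 0, 1, d0, d1)` is algebraically the same as a single `map(v, s0, s1, d0, d1)` (up to floating-point rounding), which is what the sketch uses.

```java
import java.util.Arrays;

class RemapSketch {
    // Assumed behaviour of the map helper: linear remap of v from [a0, a1] to [b0, b1].
    static float map(float v, float a0, float a1, float b0, float b1) {
        return b0 + (v - a0) * (b1 - b0) / (a1 - a0);
    }

    // Clamp the source range [s0, s1] to the valid texture range [0, 1] and
    // shrink the destination range [d0, d1] by the same proportion.
    // Returns {clampedS0, clampedS1, clampedD0, clampedD1}.
    static float[] clip(float s0, float s1, float d0, float d1) {
        float cs0 = Math.max(s0, 0f);
        float cs1 = Math.min(s1, 1f);
        float cd0 = map(cs0, s0, s1, d0, d1);
        float cd1 = map(cs1, s0, s1, d0, d1);
        return new float[] { cs0, cs1, cd0, cd1 };
    }

    public static void main(String[] args) {
        // Source range overshoots the texture by half its width on each side,
        // so only the middle half of the destination range is kept.
        System.out.println(Arrays.toString(clip(-0.5f, 1.5f, -1f, 1f)));
        // prints [0.0, 1.0, -0.5, 0.5]
    }
}
```

Note that this step only trims the quad to the part of the source that actually exists; it does not by itself limit the destination coordinates to any particular range.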

When I call this function with a destination size larger than the screen size, the image seems to be “cut off”, as if pixels “outside of the screen” were simply not rendered, even though the destination is an image that is itself larger than the screen.

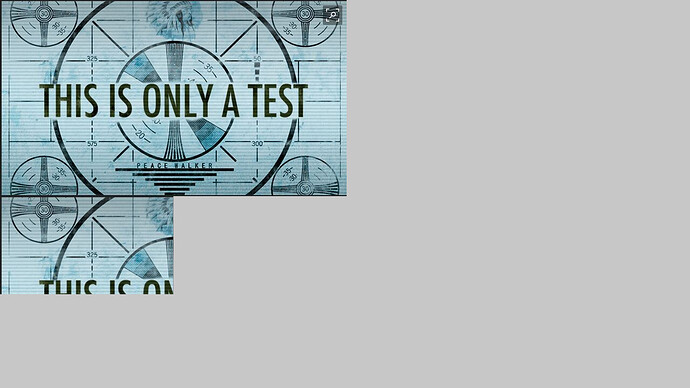

Resulting image

The top image is the input. The bottom one is the input resized to double the screen width and height, then rendered onto the screen at the same size as the input. In this case I would want both images to look the same (although in memory the bottom one is stored in a higher-resolution image).

I looked into both glScissor and glViewport. As far as I understand, glViewport only modifies the transform to pixel coordinates and shouldn’t cause this issue, whereas disabling GL_SCISSOR_TEST should behave as if the scissor box enclosed the whole window, which would prevent rendering outside of it.

This is why I also tried setting the scissor box to a larger size than the screen size and enabling the scissor test, but this seems to change nothing.

Is there anything that may cause rendering to fail in this case? Perhaps nothing is rendered when the coordinates fall outside the range [-1, 1], so that a glViewport call is mandatory?
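
To make that hypothesis concrete, here is a small sketch of the viewport arithmetic in plain Java (no JOGL needed). It uses the viewport transform from the OpenGL specification, `window = (ndc + 1) * size / 2 + origin`, together with the fact that geometry is clipped to [-1, 1] in normalized device coordinates. The class name `ViewportSketch`, the method names, and the sizes (an 800-pixel-wide window, a 1600-pixel-wide texture) are hypothetical; it also assumes the viewport is still the window-sized rectangle, since glViewport is context state and is not changed by glBindFramebuffer.

```java
class ViewportSketch {
    // Viewport transform: maps a normalized device coordinate in [-1, 1]
    // to a pixel coordinate inside the viewport rectangle.
    static float ndcToWindow(float ndc, int origin, int size) {
        return (ndc + 1f) * (size / 2f) + origin;
    }

    // Fraction of a render target's width that can receive pixels, given
    // that everything outside NDC [-1, 1] is clipped before rasterization.
    static float coveredFraction(int viewportOrigin, int viewportSize, int targetSize) {
        float lo = Math.max(0f, ndcToWindow(-1f, viewportOrigin, viewportSize));
        float hi = Math.min((float) targetSize, ndcToWindow(1f, viewportOrigin, viewportSize));
        return Math.max(0f, hi - lo) / targetSize;
    }

    public static void main(String[] args) {
        int windowWidth = 800;    // hypothetical window size
        int textureWidth = 1600;  // hypothetical destination texture, twice as wide

        // Viewport left at the window size: only half of the texture is reachable.
        System.out.println(coveredFraction(0, windowWidth, textureWidth));  // prints 0.5

        // Viewport set to the texture size: the whole texture is reachable.
        System.out.println(coveredFraction(0, textureWidth, textureWidth)); // prints 1.0
    }
}
```

If this is what is happening, the thing to try would be calling `gl.glViewport(0, 0, texWidth, texHeight)` after binding the framebuffer and restoring the window-sized viewport afterwards, where `texWidth` and `texHeight` stand for the destination texture size (names assumed here). The `getXScreen`/`getYScreen` helpers would then also need to produce coordinates relative to the texture rather than the window.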

Thanks!