Hi!

I'm trying to apply this radial shader to multiple spheres, but without success.

The problem is related to screen coordinates. The shader only works if the sphere is in the center of the screen, as this picture shows:

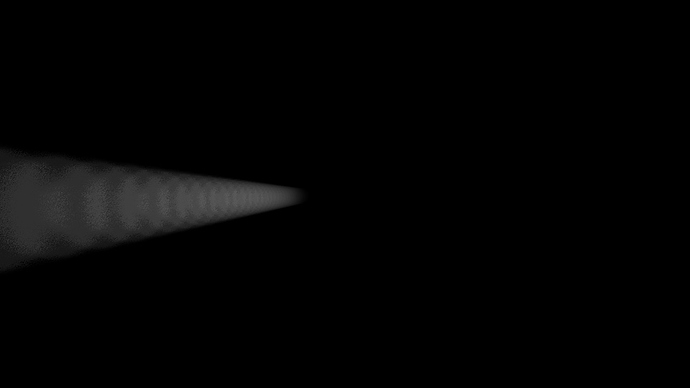

But if the center of the sphere is not aligned with the camera, this is what happens:

As you can see, the individual samples computed in the fragment shader become visible:

    #version 150

    in vec2 varyingtexcoord;

    uniform sampler2DRect tex0;
    uniform sampler2DRect depth;
    uniform vec3 ligthPos;

    float exposure = 0.19;
    float decay = 0.9;
    float density = 2.0;
    float weight = 1.0;
    int samples = 25;

    out vec4 fragColor;

    const int MAX_SAMPLES = 100;

    void main()
    {
        // float sampleDepth = texture(depth, varyingtexcoord).r;
        //
        // vec4 H = vec4 (varyingtexcoord.x *2 -1, (1- varyingtexcoord.y)*2 -1, sampleDepth, 1);
        //
        // vec4 = mult(H, gl_ViewProjectionMatrix);

        vec2 texCoord = varyingtexcoord;
        vec2 deltaTextCoord = texCoord - ligthPos.xy;
        deltaTextCoord *= 1.0 / float(samples) * density;

        vec4 color = texture(tex0, texCoord);
        float illuminationDecay = .6;

        for(int i=0; i < MAX_SAMPLES; i++)
        {
            if(i == samples){
                break;
            }
            texCoord -= deltaTextCoord;
            vec4 sample = texture(tex0, texCoord);
            sample *= illuminationDecay * weight;
            color += sample;
            illuminationDecay *= decay;
        }

        fragColor = color * exposure;
    }

The shader has a uniform input called ligthPos. I discovered a function in openFrameworks called cam.worldToScreen(); if I send the result of this function to the shader, the sphere can move all over the screen without problems. The problem is that I want more than just one sphere, and the shader only knows about a single light position, so its logic is not correct for that case. What do you suggest? I want to apply this radial blur (god-ray style) to an undefined number of spheres and other shapes. This is an example:

What do you think? I noticed that the post-processing shader (in the first pass I render the geometry, then I pass that buffer to another FBO with this shader) affects the whole screen, and I need a method to fix this. Please, I need suggestions. I'm new to shaders, so a didactic example would be great. Thanks! O.
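
P.S. To make the question more concrete, here is a rough, untested sketch of one direction I can imagine: instead of a single ligthPos, the shader receives an array of screen-space centers (one per sphere, each taken from cam.worldToScreen()) and accumulates the rays for each of them. The names lightPos, numLights, MAX_LIGHTS and raysTowards are hypothetical and not part of my current code, and the limit of 8 lights is just an assumption.

```glsl
#version 150

// Sketch only: lightPos, numLights, MAX_LIGHTS and raysTowards are
// hypothetical names, not part of the shader posted above.
in vec2 varyingtexcoord;

uniform sampler2DRect tex0;            // scene rendered in the first pass

const int MAX_LIGHTS = 8;              // assumed upper bound on spheres
uniform vec2 lightPos[MAX_LIGHTS];     // screen-space centers, in pixels
uniform int numLights;                 // number of valid entries in lightPos

const float exposure = 0.19;
const float decay = 0.9;
const float density = 2.0;
const float weight = 1.0;
const int SAMPLES = 25;

out vec4 fragColor;

// March from this fragment toward one light and accumulate the samples,
// using the same math as the single-light loop.
vec4 raysTowards(vec2 lightScreenPos)
{
    vec2 texCoord = varyingtexcoord;
    vec2 delta = (texCoord - lightScreenPos) * (density / float(SAMPLES));
    float illuminationDecay = 0.6;
    vec4 rays = vec4(0.0);

    for (int i = 0; i < SAMPLES; i++) {
        texCoord -= delta;
        rays += texture(tex0, texCoord) * illuminationDecay * weight;
        illuminationDecay *= decay;
    }
    return rays;
}

void main()
{
    vec4 color = texture(tex0, varyingtexcoord);

    // Add the ray contribution of every active light.
    for (int l = 0; l < MAX_LIGHTS; l++) {
        if (l >= numLights) break;
        color += raysTowards(lightPos[l]);
    }

    fragColor = color * exposure;
}
```

I'm not sure this is the right approach. Each light's loop still samples the whole frame, so the rays from one sphere would probably smear over the others, the image would get brighter with every light added, and the cost grows with the number of spheres.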