Hello,

I have been experimenting with 3D development for some time and recently started looking at shaders. While computing refraction, I ran into an issue: the fragments I sample from the depth texture and from the color texture do not seem to match, and I don’t know why. I have made a small example that illustrates the issue.

In my example, a box sits behind a quad in the scene. The box has a blue material, and the quad has my own material (MyMaterial). As input to the MyMaterial shader I pass the depth texture and the color texture of a framebuffer that is rendered from a camera (refractionCam) that sees only the blue box on a black background.

In the MyMaterial shader I expect the depth texture fragments to match the color texture fragments. The shader checks this: where I detect the blue color (the box), the depth should be small, and where I detect black (the background), the depth should be large. If that does not hold, I display a red or a white pixel, depending on the fault.

Shaders

Vertex:

uniform mat4 g_WorldViewProjectionMatrix;

attribute vec3 inPosition;

varying vec4 viewCoords;

void main() {

vec4 vVertex = vec4(inPosition, 1.0);

viewCoords = g_WorldViewProjectionMatrix * vVertex;

gl_Position = viewCoords;

}

Fragment:

uniform sampler2D m_refraction;

uniform sampler2D m_depthMap;

varying vec4 viewCoords;

void main() {

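// Perspective divide: clip space -> normalized device coordinates (.w is the same component as .q)

vec2 projCoordDepth = viewCoords.xy / viewCoords.w;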

projCoordDepth = (projCoordDepth + 1.0) * 0.5;

//Include distortion to reveal "bug"

projCoordDepth += vec2(0.01, 0.0);

projCoordDepth = clamp(projCoordDepth, 0.0, 1.0);

// Calculate depth from camera plane to depthMap

float z_b = texture2D(m_depthMap, projCoordDepth).r;

float z_n = 2.0 * z_b - 1.0;

float depth = 2.0 * 1.0 * 1000.0 / (1000.0 + 1.0 - z_n * (1000.0 - 1.0));

vec4 fragColor = vec4(0.0, 0.1, 0.0, 1.0);

vec4 textureColor = texture2D(m_refraction, projCoordDepth);

if (textureColor.b > 0.1) {

if (depth > 50.0) {

//indicates bug - display red pixel

fragColor = vec4(1.0, 0.0, 0.0, 1.0);

} else {

//modify blue color if blue box detected

fragColor.b = 0.3;

}

}

if (textureColor.b < 0.1) {

if (depth < 50.0) {

//indicates bug - display white pixel

fragColor = vec4(1.0, 1.0, 1.0, 1.0);

} else {

//detected black background, modify green component

fragColor = vec4(0.0, 0.1, 0.0, 1.0);

}

}

gl_FragColor = fragColor;

}
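For reference, the coordinate mapping and the depth reconstruction in the fragment shader above can be written out as follows. This is only a restatement of the shader code, with $n = 1$ and $f = 1000$ standing for the near and far plane distances that are hard-coded there (the symbols $n$, $f$, $d$ and $(u, v)$ are my own labels, not names from the shader):

$$
(u, v) = \frac{1}{2}\left(\frac{(x_c,\, y_c)}{w_c} + 1\right), \qquad
z_n = 2 z_b - 1, \qquad
d = \frac{2 n f}{f + n - z_n (f - n)}
$$

Here $(x_c, y_c, w_c)$ are the components of viewCoords and $z_b$ is the value read from m_depthMap. As a sanity check, $z_b = 0$ gives $d = n = 1$ and $z_b = 1$ gives $d = f = 1000$, so the cleared background should always land far above the threshold of 50 used in the shader.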

Both the depth texture (m_depthMap) and the color texture (m_refraction) are sampled at the same coordinate, projCoordDepth.

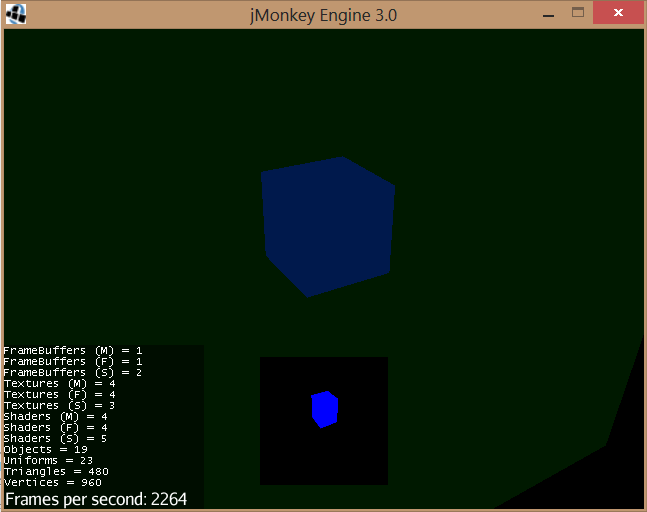

This works fine (no red or white fragments) when I don’t include the “projCoordDepth += vec2(0.01, 0.0);” in the fragment shader:

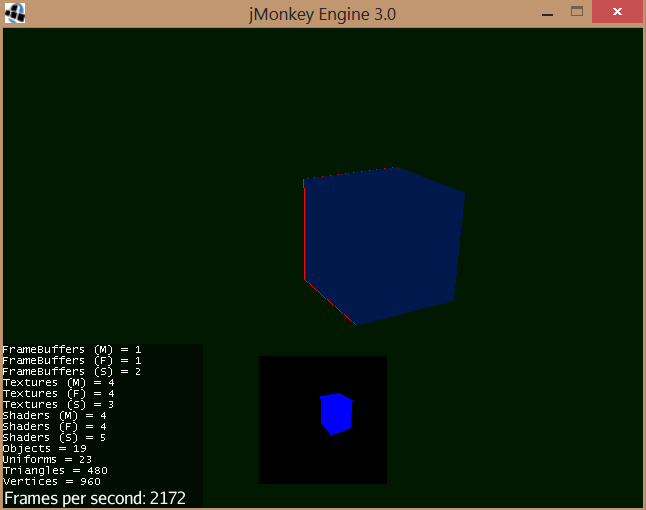

But when I include the modification “projCoordDepth += vec2(0.01, 0.0);”:

The red color indicates that the fragments of the color texture and the depth texture do not “match”, even though I use the same coordinate to sample both textures.

What could be the reason for this?

It may also be worth noting that this does not happen for every modification of the texture coordinate. For example, with “projCoordDepth += vec2(0.2, 0.0);” there are no red or white fragments.
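One way to compare the two offsets is to express them in texels rather than in texture coordinates. The framebuffer size is not stated here, so the width $W = 640$ below is purely an assumed value for illustration:

$$
\Delta_{\text{texels}} = \Delta u \cdot W, \qquad
0.01 \cdot 640 = 6.4, \qquad
0.2 \cdot 640 = 128
$$

Under that assumption, the 0.2 offset shifts the lookup by a whole number of texels, while the 0.01 offset lands between texel centers. If the two textures are sampled with different filtering (again an assumption, I have not verified the filter settings), a lookup between texel centers might return a blended color next to an unblended depth, which could be related to the mismatch along the box edges.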