Hello.

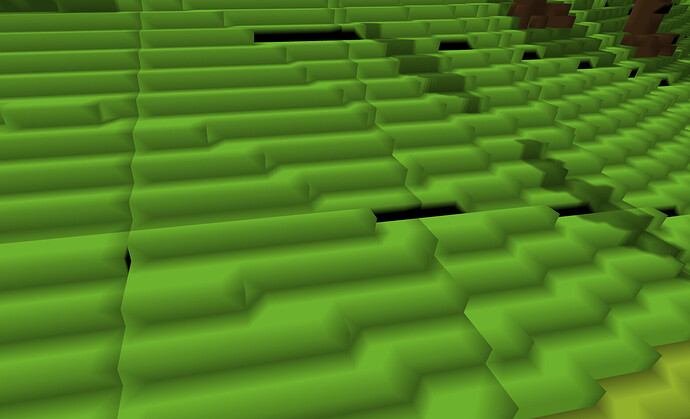

I used cubes with only 8 vertices in my project, and a problem showed up with the directional light: you can see the transitions between the chunks. More complicated geometry also breaks the normals. Is there any alternative to directional light? Do you know any tricks for dealing with this problem?

I found your problem. If you want proper lighting, then you need more vertices with normals that actually match the cube’s surface.

I know that’s the problem. However, 8 vertices is not 24, and the speed gain is worth it. My goal is speed, at the expense of other effects. I wonder whether this kind of light could be simulated with shadow mapping? I’d probably need an extra render pass, though, and that’s not very good. It is bad either way.

I’m sure you have profiling data to back that up, yes? And you have made your normal data as small as possible, right? There’s no reason a normal should take 3 floats when 4 bytes is sufficient. And you probably don’t need full floating-point precision for the position data either.
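
As a rough illustration of the point about attribute size, here is a minimal C++ sketch of a compact vertex layout. The names `PackedVertex`, `to_snorm8` and `pack_vertex` are hypothetical, and the sketch assumes that positions inside a chunk fit in the range 0..255; neither is taken from the original project.

```cpp
#include <algorithm>
#include <cmath>
#include <cstdint>

// Hypothetical compact layout: 8 bytes per vertex instead of the 24 bytes
// used by a 3-float position plus a 3-float normal.
struct PackedVertex {
    std::uint8_t position[4]; // x, y, z inside the chunk (assumed 0..255), w unused
    std::int8_t  normal[4];   // signed normalized x, y, z; w is padding
};
static_assert(sizeof(PackedVertex) == 8, "layout is expected to be tightly packed");

// Map a component in [-1, 1] to a signed byte in [-127, 127].
inline std::int8_t to_snorm8(float v) {
    const float clamped = std::min(1.0f, std::max(-1.0f, v));
    return static_cast<std::int8_t>(std::lround(clamped * 127.0f));
}

// Build one packed vertex from integer chunk coordinates and a unit normal.
inline PackedVertex pack_vertex(std::uint8_t x, std::uint8_t y, std::uint8_t z,
                                float nx, float ny, float nz) {
    PackedVertex v{};
    v.position[0] = x;
    v.position[1] = y;
    v.position[2] = z;
    v.normal[0] = to_snorm8(nx);
    v.normal[1] = to_snorm8(ny);
    v.normal[2] = to_snorm8(nz);
    return v;
}
```

On the OpenGL side, a layout like this would typically be described with glVertexAttribPointer, using GL_UNSIGNED_BYTE for the position and GL_BYTE with the normalized flag set for the normal.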

Plus, you’re probably going to want to put textures on those faces, so you’re going to need to split those vertices regardless.

But if you absolutely insist on not adding more vertices, you can compute face normals in the fragment shader by using dFdx and dFdy on the interpolated vertex position. This position needs to be in whatever space your lights are in. To compute the normal, you take the cross product of the results of those two functions and normalize it.
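
To make the arithmetic concrete, here is a small CPU-side C++ sketch of the same computation. It is only an emulation under stated assumptions: `face_normal_from_neighbours` is a hypothetical helper, and the two finite differences stand in for what dFdx and dFdy would return for the position at neighbouring pixels. The sign of the result depends on winding and coordinate conventions, so it may need to be negated.

```cpp
#include <cmath>

struct Vec3 { float x, y, z; };

inline Vec3 sub(Vec3 a, Vec3 b) { return {a.x - b.x, a.y - b.y, a.z - b.z}; }

inline Vec3 cross(Vec3 a, Vec3 b) {
    return {a.y * b.z - a.z * b.y,
            a.z * b.x - a.x * b.z,
            a.x * b.y - a.y * b.x};
}

inline Vec3 normalize(Vec3 v) {
    const float len = std::sqrt(v.x * v.x + v.y * v.y + v.z * v.z);
    return {v.x / len, v.y / len, v.z / len};
}

// CPU stand-in for normalize(cross(dFdx(pos), dFdy(pos))).
// pos is the surface position at one pixel; pos_right and pos_up are the
// positions at the neighbouring pixels in +x and +y on the same triangle.
inline Vec3 face_normal_from_neighbours(Vec3 pos, Vec3 pos_right, Vec3 pos_up) {
    const Vec3 ddx = sub(pos_right, pos); // plays the role of dFdx(pos)
    const Vec3 ddy = sub(pos_up, pos);    // plays the role of dFdy(pos)
    return normalize(cross(ddx, ddy));
}
```

Because both differences lie in the plane of the triangle, their cross product is perpendicular to it, which is why every fragment of a flat face ends up with the same normal.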

Shadow maps are for determining whether a point in space can see a particular light. They can’t tell you any characteristics about the surface at that point in space.

No. There will only be colors.

You mean something like this?

vec3 normal = normalize(cross(dFdx(pos), dFdy(pos)));

This is the first time I’ve encountered these functions. I thought normals could only be computed in the geometry shader.

You don’t need 24 distinct vertices. If a vertex shader output / fragment shader input has the “flat” qualifier, the value from the last(*) vertex of the triangle is used for every fragment, rather than interpolating between the values from 3 vertices.

(*) In OpenGL 3.2+, you can use glProvokingVertex to select either the first or last vertex of a primitive.

Provided that the cubes have the correct topology, you only need to assign the face normal to one of the four vertices for that face (specifically, to one of the two vertices which is used by both triangles), then order each triangle’s vertices so that the last vertex is the one with the correct normal.
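
The vertex ordering described above can be sketched on the CPU side as follows. This is a minimal C++ illustration, not code from the thread: the corner numbering, the `Face` table and `build_flat_cube` are all assumptions chosen for the example, and it relies on the default last-vertex provoking convention.

```cpp
#include <array>
#include <cstdint>
#include <vector>

struct Vec3 { float x, y, z; };

// Corner i of a unit cube: bit 0 selects x, bit 1 selects y, bit 2 selects z.
inline Vec3 cube_corner(int i) {
    return {float(i & 1), float((i >> 1) & 1), float((i >> 2) & 1)};
}

struct Face {
    std::array<std::uint16_t, 4> quad; // counter-clockwise seen from outside,
                                       // with the normal-carrying corner first
    Vec3 normal;
};

// Each face gets a different corner (4, 3, 7, 0, 2, 1) to carry its normal.
inline std::vector<Face> cube_faces() {
    return {
        Face{{4, 5, 7, 6}, { 0.0f,  0.0f,  1.0f}}, // +Z
        Face{{3, 1, 0, 2}, { 0.0f,  0.0f, -1.0f}}, // -Z
        Face{{7, 5, 1, 3}, { 1.0f,  0.0f,  0.0f}}, // +X
        Face{{0, 4, 6, 2}, {-1.0f,  0.0f,  0.0f}}, // -X
        Face{{2, 6, 7, 3}, { 0.0f,  1.0f,  0.0f}}, // +Y
        Face{{1, 5, 4, 0}, { 0.0f, -1.0f,  0.0f}}, // -Y
    };
}

struct CubeMesh {
    std::vector<std::uint16_t> indices; // 12 triangles, 36 indices
    std::array<Vec3, 8> normals;        // one normal slot per corner
};

// Split each quad along the diagonal through its first corner and rotate
// both triangles so that this corner comes last (the provoking vertex).
inline CubeMesh build_flat_cube() {
    CubeMesh mesh{};
    for (const Face& f : cube_faces()) {
        const std::uint16_t a = f.quad[0], b = f.quad[1],
                            c = f.quad[2], d = f.quad[3];
        mesh.normals[a] = f.normal;
        mesh.indices.insert(mesh.indices.end(), {b, c, a, c, d, a});
    }
    return mesh;
}
```

Two of the eight corners (5 and 6 in this numbering) never act as a provoking vertex, so their normal slots stay unused. In the shaders, the normal output and input would both need the flat qualifier for this to take effect.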

This is likely to be more efficient than calculating the face normal per-fragment.

For an arbitrary triangle mesh, you typically end up needing twice as many normals as vertex positions (the number of faces is asymptotically twice the number of vertices), but for quads you always have a pair of triangles sharing each normal so it’s possible to get 1:1.

This approach is great: you don’t have to worry about normals at all. Unfortunately, I am concerned that doing this computation in the fragment shader could slow everything down, because the fragment shader already does a lot of calculations. The gain from a small number of vertices could turn out to be illusory.

This is also an option, but my geometry shares vertices across all chunks, so many normals could be overwritten. I would have to give up my geometry processing and optimization.

The question is which will put less load on the GPU.

This approach, with a small number of vertices:

vec3 normal = normalize(cross(dFdx(pos), dFdy(pos)));

Or this approach:

glProvokingVertex

for which I would have to increase the number of vertices 2-3 times.

You’re doing a couple of subtractions, a cross-product, and a reciprocal-square-root. I don’t think you’re going to notice. Not unless you have massive overdraw, which is a problem we already know how to solve with other tools.

I will try to move as many calculations as possible to the vertex shader.

Thanks for your help. Not only did I solve the problem, but I also learned a lot.
